This article is part of Upstream, The Daily Wire’s new home for culture and lifestyle. Real human insight and human stories — from our featured writers to you.

***

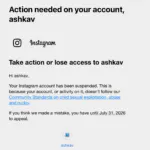

It was a normal Saturday night in suburban Pennsylvania. Ashley Kavcak, a mom of four, was sitting on the couch mindlessly scrolling Instagram like she did all the time. But on this night, everything changed in an instant when a notification popped up saying her account had been disabled.

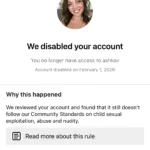

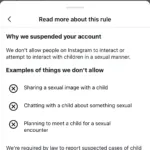

The reason made her stomach drop. According to the pop-up, she had been banned for violating “Community Standards on child sexual exploitation, abuse, and nudity.”

“It made me want to throw up,” she told The Daily Wire in an interview. “I started immediately thinking, what did I do? Like oh my gosh, what could I have possibly liked or done that would make them think I’m something so horrible?”

Ashley had maintained a private Instagram account for 14 years, carefully curating who could see photos of her children because she, like so many moms, was creeped out by anonymous internet weirdos. She had roughly 200 followers, mostly family and close friends.

She never found out what triggered the ban because Instagram doesn’t tell you.

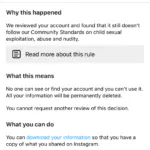

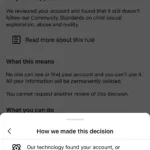

There was an option to appeal the decision, but in her case that appeal came back denied in six minutes. “At this point I don’t think there are any humans working for Meta,” Ashley said.

- Screenshots provided by Ashley Kavcak

Ashley’s case is part of a disturbing pattern affecting thousands of Instagram users nationwide. It all blew up around May 2025 when Meta reportedly rolled out new AI moderation models, which immediately triggered the overnight disabling of clusters of Instagram accounts around the world. Journalists began documenting how images of cars, family photos, and art were all being improperly flagged as child sexual exploitation.

CBS Philadelphia reported that nearly 50,000 Facebook and Instagram users have signed an online petition claiming wrongful bans, with many involving false accusations of child exploitation. NBC Connecticut documented 77 complaints across NBC stations in six months, with 17 specifically for “child sexual exploitation, abuse, and nudity.”

In June 2025, a law firm in St. Paul, Minnesota, began seeking plaintiffs for a class action lawsuit against Meta for those who believe they were wrongfully banned. The question at the heart of these cases: What happens when automated systems make life-altering accusations with no visible human oversight?

For Ashley, the accusation carried particular irony. She had kept her account private from the beginning, specifically to protect her children from online dangers. She was selective about whom she accepted as followers. In recent years, she started asking her children’s permission before posting their photos.

“I was worried about predators,” she says. “Now, according to AI, I am the predator.”

When the ban notification appeared, Ashley couldn’t identify what might have triggered it. Her last post was from January 17 and included photos of her family walking on a snowy trail wearing winter coats and hats. She had recently messaged her teenage daughter about going to Hobby Lobby, which made her wonder if that innocent conversation had somehow been flagged.

“She has a teen account. I am a moderator for her account and am listed as her parent,” Ashley explained. “There shouldn’t be a reason I was flagged for that … but now I’m questioning everything.”

Instagram’s notification was frustratingly vague. “They say click here for more information, and it tells you this could be this or it could be that,” she said. The possibilities ranged from posting inappropriate content to arranging to meet a minor, none of which she had done.

The mistake sent her down the Reddit rabbit hole of researching what she could possibly do to get her account back. What she found was less than encouraging. According to other users who went through the same thing, the ban is tied to her IP address, so trying to sign up and restart with a different email address wouldn’t work.

“My email, my phone, everything has a mark on it,” she says. Users report having to buy new phones and use different WiFi networks just to access Instagram again. “The length that people have to go just to get Instagram back, it’s not even worth it,” she said.

The whole experience highlighted how much people depend on social media always being there. With the ban, Ashley lost access to nearly 2,000 photos spanning 14 years, many of which she no longer has saved anywhere else. “There’s definitely years and years of stuff just lost forever,” she said.

Beyond the photos, she lost her primary means of staying connected with far-away family and friends, people like an out-of-state cousin who loved seeing pictures of the kids and high school friends who kept up with her family through her posts. “These people that I once talked to probably think I fell off the face of the earth,” Ashley said. “Or maybe they think I blocked or deleted them.”

She missed a friend’s pregnancy announcement. She had to text another friend to ask about a mutual friend’s surgery. Both were updates that would have appeared in her feed. “Social media is where people do their updates. That’s where you share your life,” Ashley said. “You don’t even think about it.”

The experience revealed how much of modern life is built on these platforms. “You don’t realize how dependent you are on it until it’s gone,” she added.

The accusation itself also left lasting damage. Ashley immediately worried about the implications: “Do they report me? Am I in trouble with the public? Can I even volunteer at my kids’ school anymore? Like am I on some kind of list now?”

Redditors said she probably wasn’t on a government watchlist, but she still felt weird about it. She found herself adding disclaimers when explaining to people what happened. “I didn’t want anybody to think that of me,” she says. “What if somebody thinks this is true?”

The false accusation of exploiting children after spending years protecting her kids from online dangers remains deeply painful. “That’s one of those things that I’m like, well, this is ironic,” she says. “I was private to block things like this from happening.”

Perhaps the most frustrating aspect of Ashley’s experience is the impossibility of reaching a human at Meta for help.

“There are no humans,” she said. “You can’t bypass the robots to get in touch with a human.”

“I feel like this is a deeper conversation too about that aspect … there’s no customer service anymore,” she went on. “There are no people to plead your case to.”

Meta did not return The Daily Wire’s multiple requests for comment.

Ashley has sent countless emails to support addresses with no luck at all. “I’m emailing nobody,” she said. “At this point they’re probably scanning my emails and thinking I’m a robot because I just keep sending the same thing over and over.”

Meta’s approach to content moderation has created a flagging system that appears to be malfunctioning on a massive scale. The company’s operating model of “remove first, ask questions later — or never” means AI is likely making decisions with minimal human oversight, and appeals are mostly processed by bots, according to reports.

“Ordinary users are being hit with these bans completely out of nowhere — no prior warnings, no real reasoning, no chance to appeal effectively,” one Redditor observed. “And while losing photos, messages, and memories sucks — and it does — the larger issue is that Meta is slapping these life-altering accusations onto people’s digital identities without context or due process.”

The European Commission last year initiated formal proceedings against Meta for potential violations of the Digital Services Act, which requires platforms to provide clear reasons for removals and offer the chance for users to appeal.

But for users like Ashley, slow-moving regulatory action feels like it won’t help much. She just wants her account back, her photos restored, and some acknowledgment that what happened to her was wrong.

“What they’re doing is really affecting people negatively,” she said when asked what she would tell Meta if someone was listening. “We lost something very personal to us and to be accused … it feels like an invasion.”

The broader questions remain unanswered: How many others have been wrongly accused? Are any humans reviewing the appeals? Or do they just not care? For Ashley, it’s been a painful education in how fragile our digital lives are and how little power we have against the algorithms ruling the world of social media.
